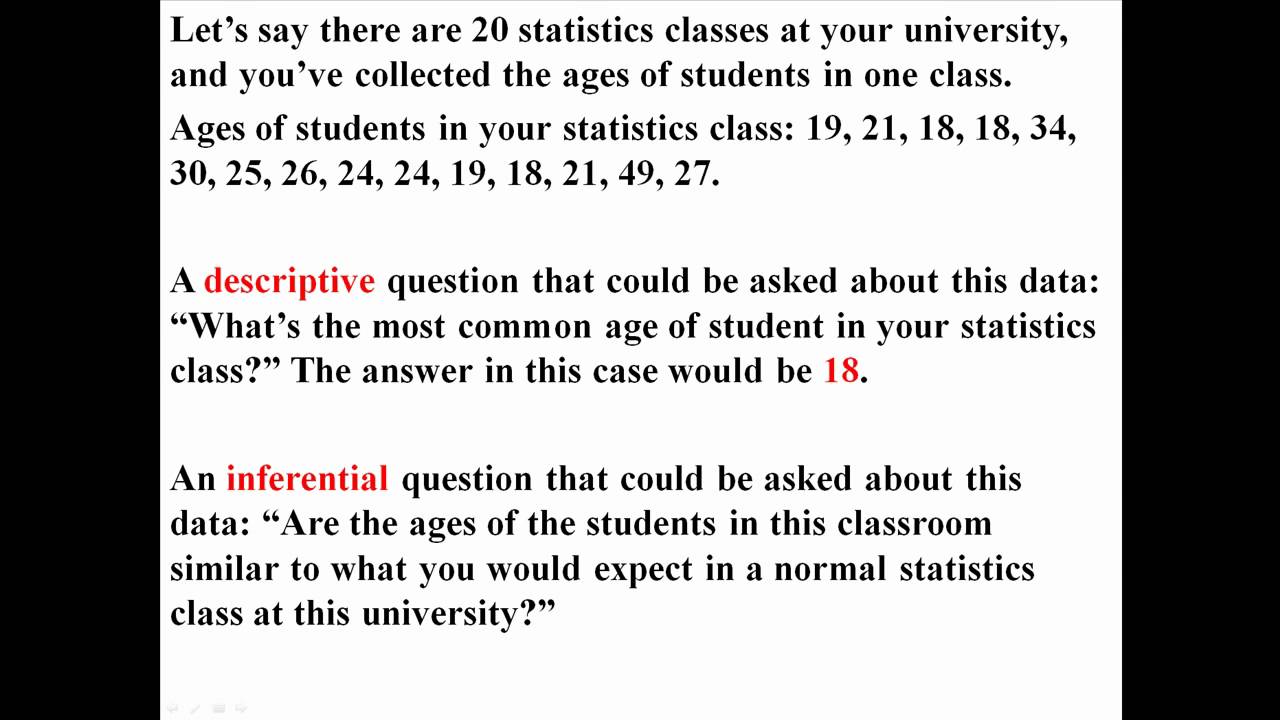

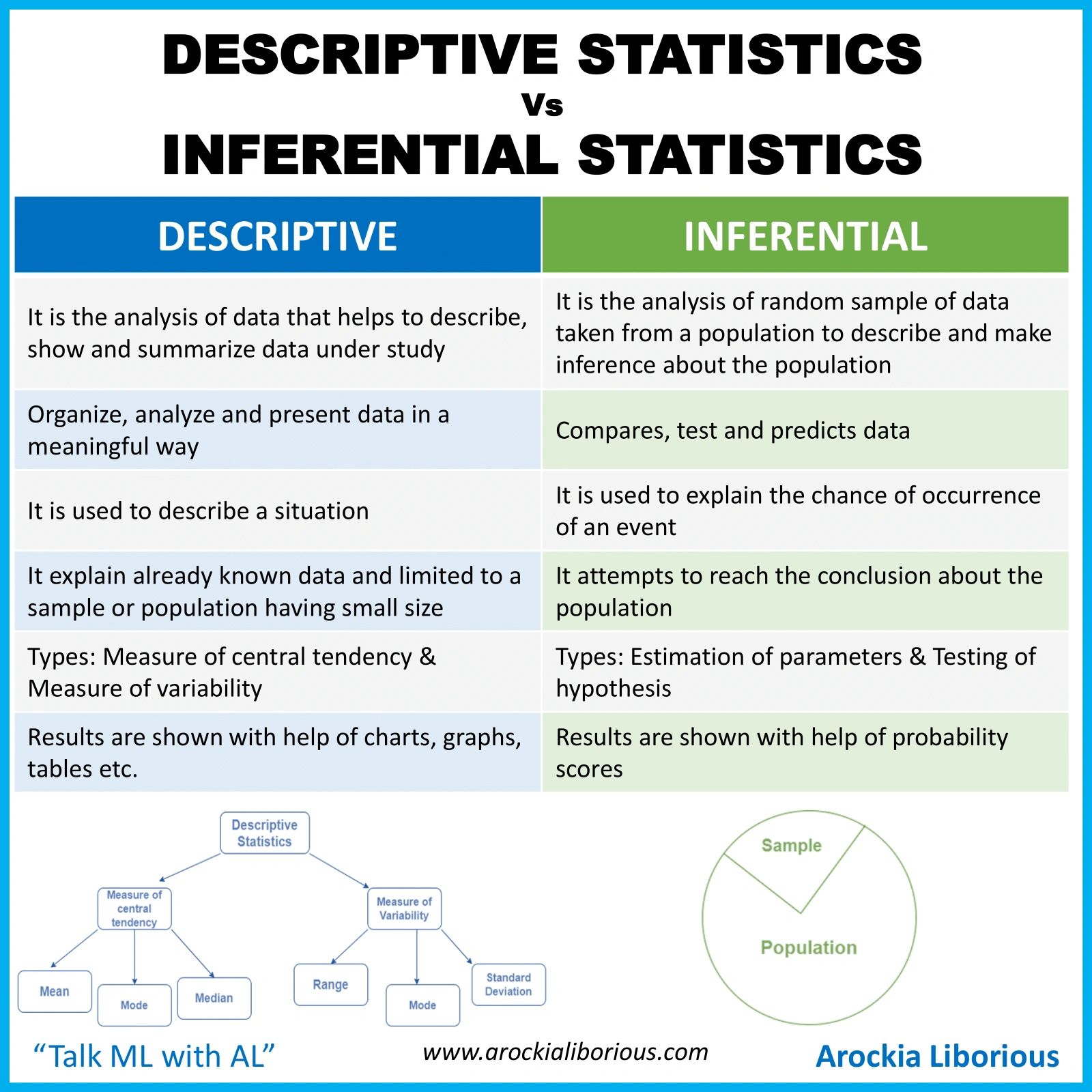

I started my re-discovery of statistics with an introduction here. This second post is about descriptive statistics: very basic, simple statistics you begin with as a learner. Descriptive statistics are also called summary statistics and serve to describe/summarize the data. They allow you to understand what the data is about and get a feel for its common features. There are two types of descriptive statistics: measures of central tendency and measures of dispersion.

You can find out minimum and maximum values, outliers, average and most frequently occurring values with descriptive statistics. It takes you no further than that: it is not meant for deeper study of the data or for predictive and other advanced analytics, but it is where you begin in terms of understanding your data. In this post I took 5 very basic measures of descriptive statistics in category 1 for my specific measure, which is life expectancy across the globe.

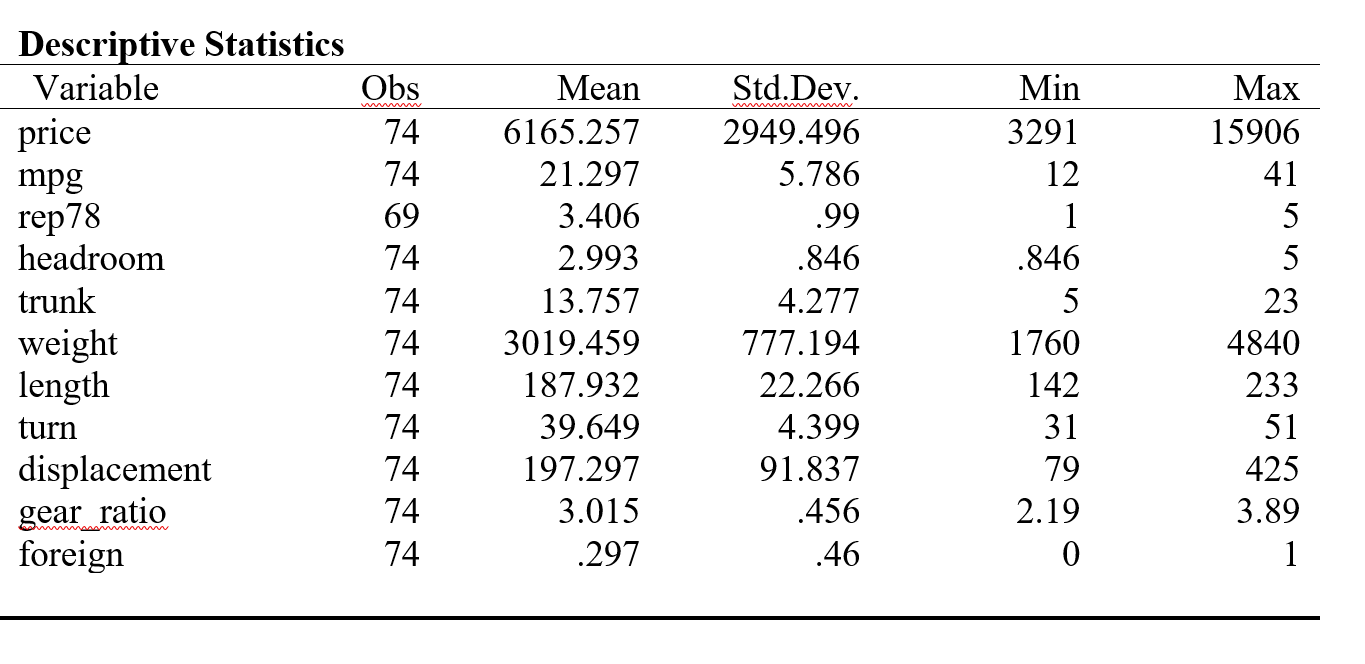

It is easy to understand what minimum and maximum values are. They help you understand how wide the data set is. Some people also look at the number of nulls in the dataset.

Mean or average is the sum of all values divided by the number of values. Mean is very commonly used but does a poor job when there are outliers in the data, such as an unusually large or small value that can skew the average.

Median is what you see as the middle on a sorted scale of values. In other words, median is the physical center of the data. Since median is not mathematically based it is rarely used in calculations. It does handle outliers better than mean does.

Mode is the most common value, which is very useful when you need to understand which values occur most frequently. Mode can be used on nominal as well as numeric data, hence it is the most widely applicable measure of central tendency. There can be more than one mode in a sample.
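As a quick illustration of these definitions, here is a minimal R sketch. The values in `ages` are made up for the example (they are not taken from the WHO table) and include one deliberately small outlier.

```r
# Hypothetical life-expectancy values, NOT from the WHO table; 20 is an outlier.
ages <- c(70, 72, 72, 75, 78, 20)

min_age    <- min(ages)     # smallest value
max_age    <- max(ages)     # largest value
mean_age   <- mean(ages)    # sum of values divided by number of values
median_age <- median(ages)  # middle of the sorted values

# Frequency table: the mode is the value (or values) with the highest count.
counts   <- table(ages)
mode_age <- as.numeric(names(counts)[counts == max(counts)])

cat("Min:", min_age, "Max:", max_age, "\n")
cat("Mean:", mean_age, "Median:", median_age, "Mode:", mode_age, "\n")
```

With these made-up values the mean comes out at 64.5 while the median stays at 72, which shows how a single unusually small value drags the mean but leaves the median alone.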

The formulas to arrive at these values are relatively simple. I tried them using both T-SQL and R, and also by calling the R script via SQL. T-SQL is not really the best tool for statistical analysis, although it is useful for a beginner to get started. R, on the other hand, has built-in functions for most of this.

With R integration into SQL Server 2016 we can pull in an R script and integrate it rather easily. I will be covering all 3 approaches. I am using a small dataset, a single table with 915 rows, with a SQL Server 2016 installation and R Studio. The complexities of doing this type of analysis in the real world with bigger datasets involve setting various options for performance and dealing with memory issues, because R is very memory intensive and single threaded.

My table and the data it contains can be created with scripts here. For this specific post I used just one column in the table: age. For other posts I will be using the other fields such as country and gender.

1. Using T-SQL:

Below are my T-SQL queries to get minimum, maximum, mean and mode. The mode is rather difficult using T-SQL. Given how easy it is to do with R, I did not spend a lot of time on it. How to arrive at the mode of a dataset using T-SQL has been researched well and information can be found here for those interested.

The results I got were as below:

2. Using R:

I downloaded and installed R Studio from here. The script should work just the same with any other version of R.

Before running the actual functions to get results, one has to load the required libraries in R and connect to the database, then load the table contents into what is called a dataframe in R. The commands below help us do that.

    install.packages("RODBC")
    library(RODBC)

    # The backslash in the server name is doubled because "\S" is not a valid escape in an R string.
    cn <- odbcDriverConnect(connection="Driver={SQL Server Native Client 11.0};server=MALATH-PC\\SQL;database=WorldHealth;Uid=sa;Pwd=<password>")
    data <- sqlQuery(cn, 'select age from [dbo].[WHO_LifeExpectancy] where age is not null')

The data in my table is now in a dataframe called 'data' in R. To run statistical functions I need to 'unpivot' the dataframe into a single vector, and I have to use the 'unlist' function in R for that purpose. When I run the R script via SQL, this is not necessary as it reads directly from the SQL tables. The R script I used to get the values is as below.

    # Calculate the minimum value of data
    minvalue <- min(unlist(data))
    cat("Minimum life expectancy", minvalue)

    # Calculate the maximum value of data
    maxvalue <- max(unlist(data))
    cat("Maximum life expectancy", maxvalue)

    # Find mean.
    data.mean <- mean(unlist(data))
    cat("Average Life Expectancy", data.mean)

    # Find mode
    # Create the function.
    getmode <- function(v) {
      uniqv <- unique(v)
      uniqv[which.max(tabulate(match(v, uniqv)))]
    }

    # Calculate the mode using the user function.
    data.mode <- getmode(unlist(data))
    cat("Most common life expectancy", data.mode)

    # Find median.
    data.median <- median(unlist(data))
    data.median

Note that apart from mode all the rest are built-in functions in R. I borrowed the code for mode from here (the cool thing about R is the huge number of pre-written scripts to do just about anything).
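One thing worth knowing about the borrowed `getmode` function: because it relies on `which.max`, it returns only the first of the most frequent values, even when a sample has more than one mode. The sketch below shows a possible variant that returns all of them. The name `getmodes` and the sample values are my own illustration, not part of the original script.

```r
# Original helper: returns only the FIRST most frequent value.
getmode <- function(v) {
  uniqv <- unique(v)
  uniqv[which.max(tabulate(match(v, uniqv)))]
}

# Hypothetical variant: returns EVERY value tied for the highest count.
getmodes <- function(v) {
  uniqv  <- unique(v)
  counts <- tabulate(match(v, uniqv))  # occurrences of each unique value
  uniqv[counts == max(counts)]
}

# Made-up sample with two modes (72 and 75 both appear twice).
sample_ages <- c(70, 72, 72, 75, 75, 80)

single_mode <- getmode(sample_ages)   # 72
all_modes   <- getmodes(sample_ages)  # 72 75

cat("getmode: ", single_mode, "\n")
cat("getmodes:", all_modes, "\n")
```

On a dataset with a single clear mode both functions give the same answer, so this only matters when ties are possible.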

The results I got are as below:

Note that the values of max, min, mean and mode are exactly the same as what we got from T-SQL, which suggests the calculations are correct.

3. Using R function calls with T-SQL

Details on the configuration needed to use R within SQL Server are explained here.

The last and final step was to try the same script, with adjustments to certain R-specific commands such as 'unlist', which unlists the dataframe, and 'cat', which displays concatenated results. The scripts are as below. The first one is the call for the basic statistics and the second is to get the mode. I separated the function for mode into a script by itself as it was multiple lines and hard to integrate within the same call.

    EXEC sp_execute_external_script
        @language = N'R',
        @script = N'
            minvalue <- min(InputDataSet$LifeExpectancies);
            cat("Minimum life expectancy", minvalue, "\n");
            maxvalue <- max(InputDataSet$LifeExpectancies);
            cat("Maximum life expectancy", maxvalue, "\n");
            average <- mean(InputDataSet$LifeExpectancies);
            cat("Average life expectancy", average, "\n");
            medianle <- median(InputDataSet$LifeExpectancies);
            cat("Median life expectancy", medianle, "\n");
        ',
        @input_data_1 = N'SELECT LifeExpectancies = Age FROM [WorldHealth].[dbo].[WHO_LifeExpectancy];';

    EXEC sp_execute_external_script
        @language = N'R',
        @script = N'
            getmode <- function(v) {
              uniqv <- unique(v)
              uniqv[which.max(tabulate(match(v, uniqv)))]
            };
            modele <- getmode(InputDataSet$LifeExpectancies);
            cat("Most common life expectancy", modele);
        ',
        @input_data_1 = N'SELECT LifeExpectancies = Age FROM [WorldHealth].[dbo].[WHO_LifeExpectancy];';

Other than a slight difference in mode, which I suspect is because of decimal rounding issues, the results are exactly the same as what we got via T-SQL and R Studio.
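To see how rounding could, in principle, change a mode, here is a small R sketch. It is only an illustration of the general effect with made-up values; it is not a diagnosis of the actual difference seen above.

```r
# Same mode helper as used earlier in this post.
getmode <- function(v) {
  uniqv <- unique(v)
  uniqv[which.max(tabulate(match(v, uniqv)))]
}

# Hypothetical decimal values, NOT from the WHO table.
raw <- c(71.9, 72.1, 72.2, 68.3, 68.3)

mode_raw     <- getmode(raw)         # 68.3 is the only repeated exact value
mode_rounded <- getmode(round(raw))  # after rounding, 72 appears three times

cat("Mode of raw values:    ", mode_raw, "\n")
cat("Mode of rounded values:", mode_rounded, "\n")
```

If one tool compares values at full precision and another sees them rounded or cast to a different type, the most frequent value can differ in exactly this way.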

In the next post I am going to deal with measures of dispersion. Thank you for reading and do leave me comments if you can!

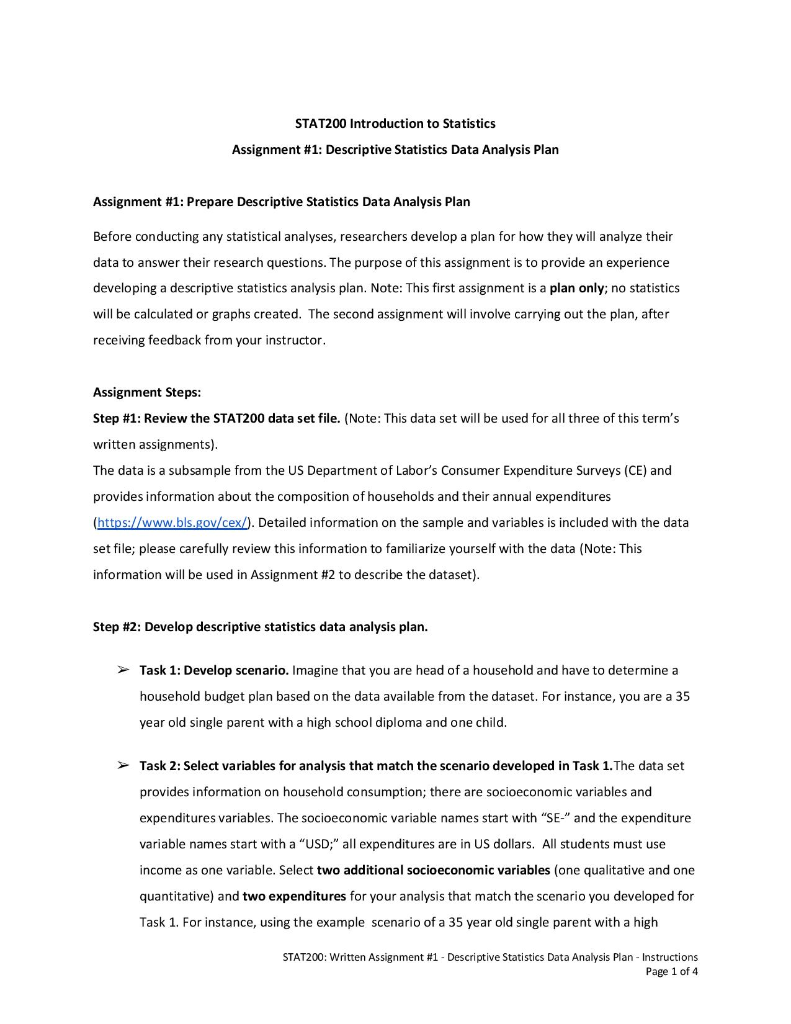

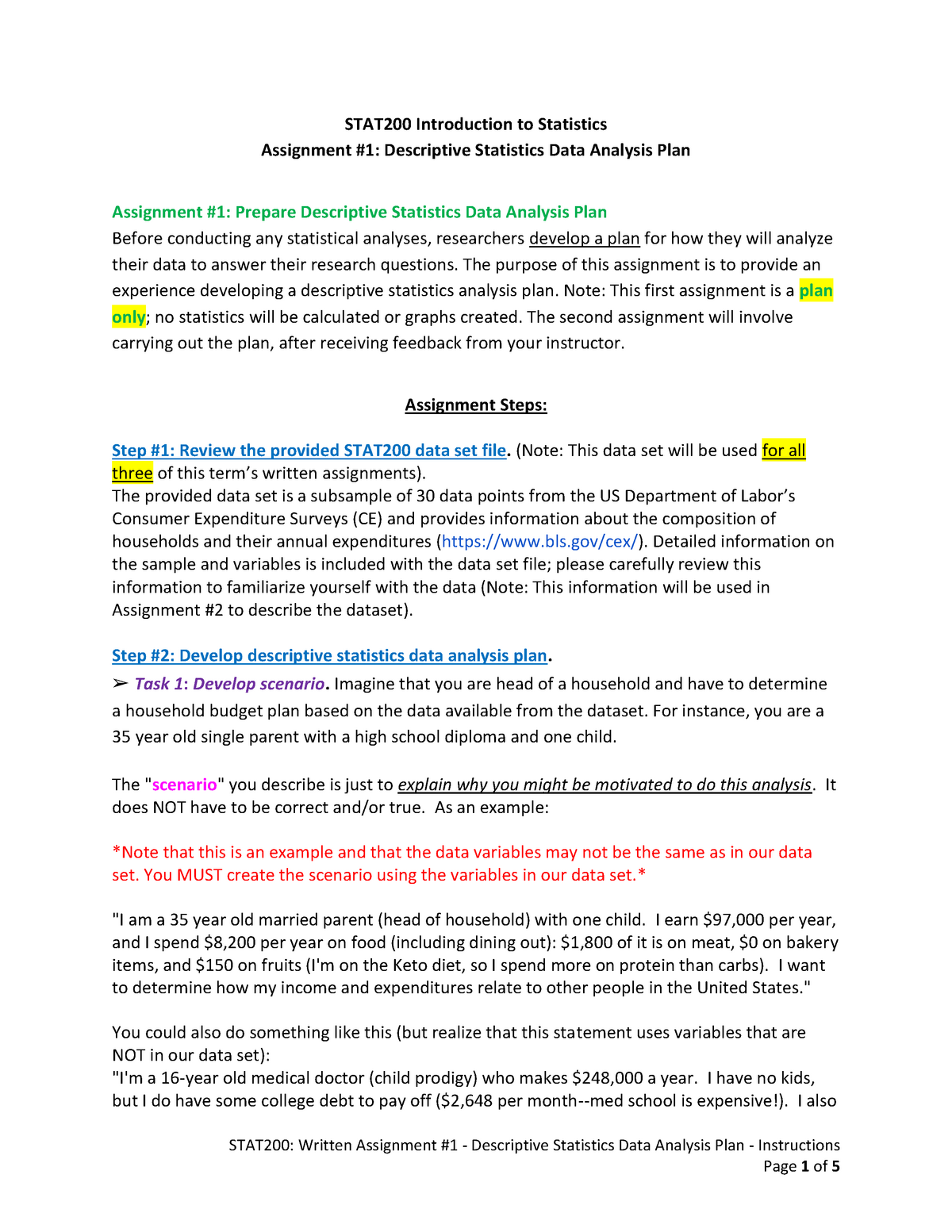

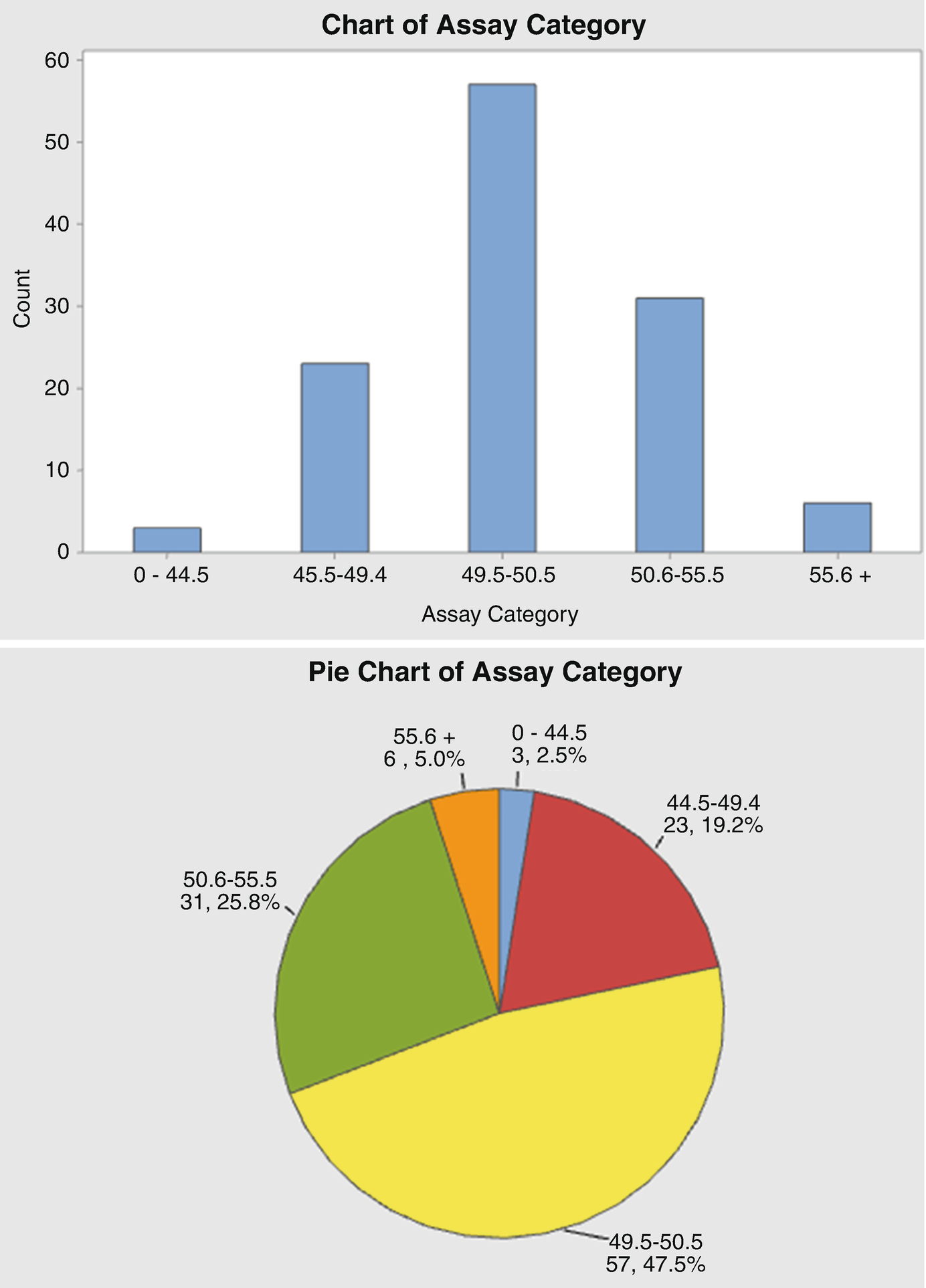
